Editor’s Note: As we stride into 2024, the landscape of data and analytics continues its dynamic evolution. The following trends have been forecasted based on current trajectories and industry insights. However, the ever-changing nature of technology demands a continuously adaptive approach. Keep in mind that these latest data analytics trends serve as a compass rather than an immutable roadmap, guiding us through the exciting and innovative terrain of data analytics.

Do you often analyze things? Do you overthink, feel overwhelmed by the facts, and attempt to scrutinize every detail from A to Z? Well, you're not alone!

Interestingly, businesses often find themselves in a similar mess. In the modern data-driven world, companies amass an extensive repository of information, from customer preferences and market trends to production metrics and inventory records. Effectively managing and utilizing this data is essential for making informed decisions and maintaining a competitive edge, don't you agree?

That's where staying updated with data and analytics trends becomes invaluable for businesses. Organizations can gain a competitive edge by keeping a finger on the pulse of the latest data analytics trends and methodologies. Understanding these trends allows companies to derive actionable insights, improve operational efficiency, enhance customer experiences, and identify improvement opportunities.

By implementing the latest trends in data analytics, businesses can navigate the maze of data more effectively, streamline their operations, and optimize their decision-making processes. In the current era where information is power, being on the cutting edge of data analytics is not just a benefit; it's a necessity!

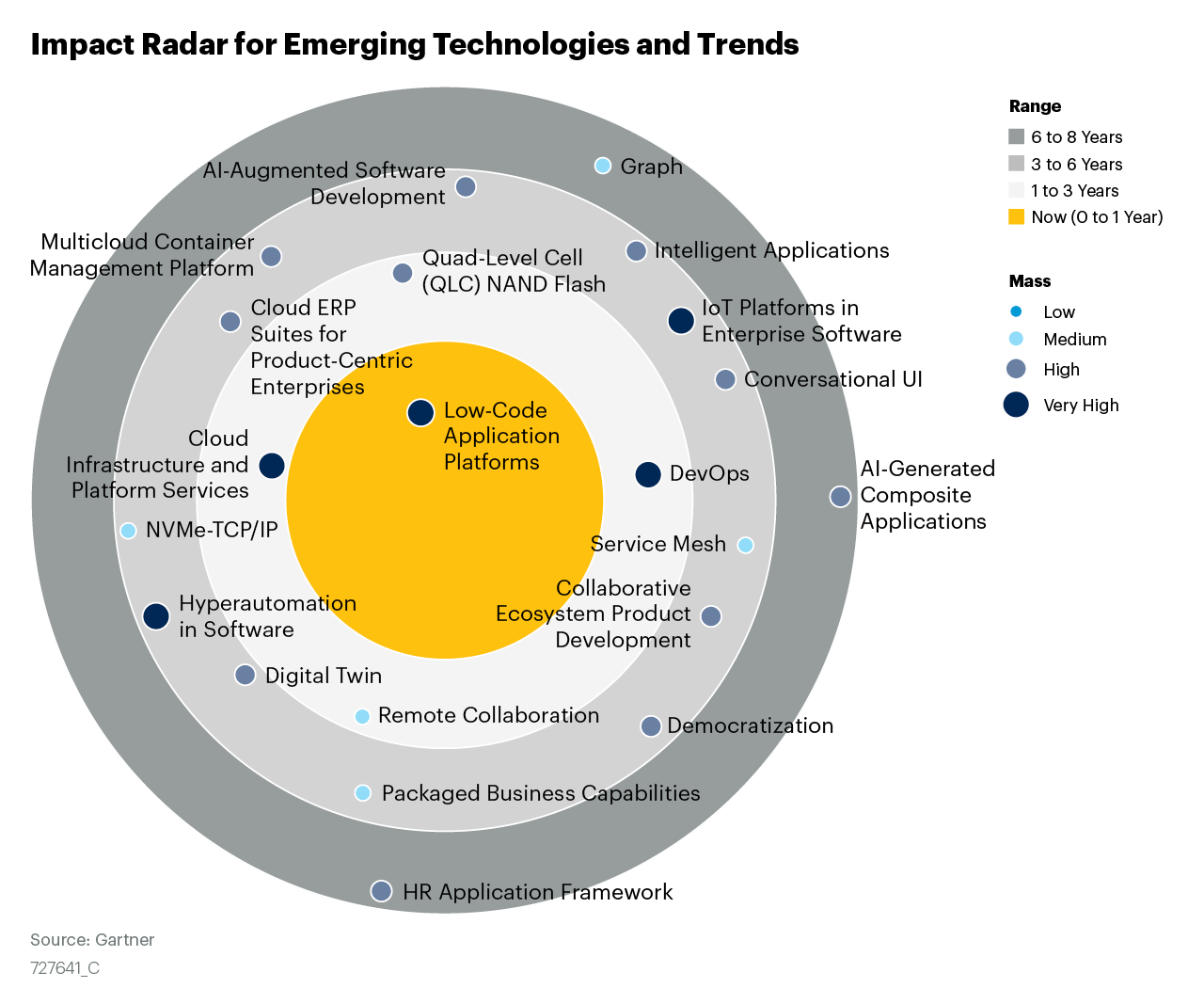

Before hopping on to explore the blog, let's have a quick look at the emerging tech impact radar for data and analytics by Gartner.

As we gaze into 2024, one thing is clear – AI tools are relentless. For instance, Large Language Models (LLMs), such as ChatGPT, will flex their linguistic muscles to generate SQL queries from plain natural language. Yet, why is operationalizing AI for data analytics so significant? Well, it revolves around harnessing the immense potential stemming from the fusion of Artificial Intelligence (AI) and Machine Learning (ML), poised to fundamentally reshape how we approach and analyze data.

AI and ML technologies are currently wowing the world with their incredible ability to extract data from unstructured documents at an astounding accuracy rate of nearly 95%. In a world where approximately 90% of data lacks a well-defined schema, organizations are left to navigate the vast and often chaotic data oceans they accumulate.

Take Netflix's recommendation system, for example. Just as it harnesses AI/ML to curate your personalized watchlist, operationalizing AI applies the same principles to the broader scope of data analytics. It's about automating, optimizing, and streamlining the processes that make sense of the data deluge so companies can extract invaluable insights and make informed decisions.

That's how operationalizing AI can propel organizations toward data-driven success. So, as we look forward to 2024, embracing operationalized AI isn't just a choice; it's a necessity for those who aim to harness the full potential of their data analytics endeavors.

In 2024 and ahead, organizations will increasingly embrace data literacy as they seek to extract valuable insights from the vast realms of big data, artificial intelligence (AI), and machine learning. Data literacy is not a technology solution; it's a honed skill that holds the key to unravelling your business's data potential. In 2024, data literacy will empower organizations to use data effectively, interpret visualizations, act on insights and handle data responsibly.

According to Gartner, data literacy refers to the skill to read, express, and efficiently communicate data within its context. It involves grasping data origins, structures, analytical techniques, and the capability to articulate the practical applications and benefits. Consequently, numerous businesses are intensifying their focus on enhancing data literacy to align with their evolution into data-centric organizations.

Organizations like Johnson & Johnson are educating employees on advanced technologies, including generative AI, to bridge this knowledge gap. They're leveraging AI-driven skills inference models to effectively harness internal and external data. Many associate data science training programs with the initial step toward becoming data-driven, but the fundamental transformation lies in making data more accessible.

Altogether, this year, we will witness most industries training and upskilling their workforce to become data literate, leading to better data analytics outcomes.

According to Gartner, by 2026, 30% of organizations implementing distributed data architectures will have adopted data observability techniques to improve visibility over the state of the data landscape, up from less than 5% in 2023. In 2024, businesses and industries will increasingly prioritize the ability to observe and analyze data as it’s generated to ensure the performance of data systems. Data observability platforms will track data pipelines, monitor data quality, and provide instant insights.

The shift towards data freshness allows organizations to base decisions on the most current information. Whether monitoring customer behavior or tracking supply chain data, real-time data analysis will be a game-changer for getting reliable insights and making better-informed decisions.
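To make the idea of tracking data quality and freshness a little more concrete, here is a minimal Python sketch of the kind of check an observability tool might run on each batch of data. It is an illustration only, not how any particular platform works: the `check_batch` function, the `BatchReport` fields, the field names `customer_id` and `loaded_at`, and the 15-minute threshold are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class BatchReport:
    row_count: int
    null_rate: float                 # share of rows missing the required field
    staleness: Optional[timedelta]   # age of the newest row, None if no rows
    is_fresh: bool


def check_batch(rows, required_field, timestamp_field, now, max_staleness):
    """Compute simple quality and freshness signals for one batch of rows."""
    row_count = len(rows)
    if row_count == 0:
        # An empty batch is treated as not fresh (an assumption of this sketch).
        return BatchReport(0, 0.0, None, False)
    missing = sum(1 for r in rows if r.get(required_field) is None)
    newest = max(r[timestamp_field] for r in rows)
    staleness = now - newest
    return BatchReport(
        row_count, missing / row_count, staleness, staleness <= max_staleness
    )


# Hypothetical sample batch: two order rows, one with a missing customer id.
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [
    {"order_id": 1, "customer_id": "a", "loaded_at": now - timedelta(minutes=5)},
    {"order_id": 2, "customer_id": None, "loaded_at": now - timedelta(minutes=50)},
]
report = check_batch(rows, "customer_id", "loaded_at", now, timedelta(minutes=15))
print(report)
```

In practice, a report like this would feed an alert or a dashboard, so a stale or incomplete batch is caught before it reaches decision-makers.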

It's 2024, and as we live in an ever-evolving data analytics landscape, a groundbreaking trend is taking center stage: the utilization of synthetic data. This virtual data, born from the depths of computer programs, may not be tied to real-world events or individuals, but it's rapidly proving its worth in data analytics.

Gartner's predictions are nothing short of revolutionary – they foresee that a staggering 60% of the data used by AI and analytics solutions will be synthetic data by 2024. The synthetic data trend is a quantum leap toward unlocking the full potential of data analytics, safeguarding privacy, and empowering businesses to thrive in a data-driven world.

For instance, UC Davis Health in healthcare and Provinzial in insurance are pioneering synthetic data for disease forecasting and predictive analytics, respectively, saving time and ensuring privacy. Meanwhile, the City of Vienna harnessed synthetic data to develop various software applications in the public sector, avoiding the use of private individuals' data restricted under GDPR while creating valuable tools for citizens.
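How does a program produce data that is not tied to real individuals? One very simple approach, sketched below in Python, is to learn summary statistics from real records and then sample new records from them. This is a deliberately naive illustration rather than the method used by any organization mentioned above: the `fit_columns` and `sample_synthetic` helpers and the sample records are hypothetical, and each column is modeled as an independent normal distribution, which ignores the correlations that production-grade generators try to preserve.

```python
import random
import statistics


def fit_columns(records):
    """Estimate the mean and standard deviation of each numeric column."""
    columns = records[0].keys()
    return {
        col: (
            statistics.mean([r[col] for r in records]),
            statistics.stdev([r[col] for r in records]),
        )
        for col in columns
    }


def sample_synthetic(params, n, seed=0):
    """Draw n synthetic records, sampling each column independently."""
    rng = random.Random(seed)  # seeded so the output is reproducible
    return [
        {col: rng.gauss(mu, sigma) for col, (mu, sigma) in params.items()}
        for _ in range(n)
    ]


# Hypothetical "real" records; no synthetic row copies any of them directly.
real = [
    {"age": 30, "visits": 2},
    {"age": 40, "visits": 4},
    {"age": 50, "visits": 6},
]
params = fit_columns(real)
synthetic = sample_synthetic(params, n=5, seed=42)
for row in synthetic:
    print(row)
```

The synthetic rows follow the overall shape of the original data without reproducing any single record, which is the basic privacy argument behind the trend.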

Data fabric architecture helps businesses navigate a challenging digital landscape that generates large volumes of unstructured data every minute. It allows companies to adopt a modular approach, referred to as composability, through which they can integrate new abilities or features as reusable, low-code, individual components. Unlike traditional architecture, composability lets businesses incorporate new features and changes into their enterprise applications without redoing their tech stacks.

As per Gartner, data fabric decreases deployment time by 30 percent and maintenance time by 70 percent. The ability to reuse capabilities and technologies from multiple data hubs, data warehouses, and data lakes is expected to go a long way in tailoring analytics experiences.

Pioneering the future of data management, the data mesh approach champions decentralized data control and ownership. It treats data as a precious asset and establishes specialized domain-centric data teams.

The benefits of data mesh are quite compelling: it eases the burden on storage systems, promotes interoperability, and strengthens security and regulatory compliance. Zhamak Dehghani, the innovator behind data mesh, has introduced Nextdata, her data company designed to assist businesses in adopting Data Mesh architecture and decentralized data product containers.

For instance, JPMorgan Chase Bank's utilization of a data mesh solution with AWS demonstrates the concept in action. Previously, extracting and collating data from multiple domains was a cumbersome process. However, data mesh allows owners to make information accessible via data lakes, creating an enterprise data catalogue. This will lead to seamless data flow between teams' applications and revolutionize data management and decision-making for forward-thinking businesses in 2024.

Adaptive data and analytics governance is an organizational ability that allows context-appropriate governance mechanisms and styles to be applied to different data and analytics scenarios to achieve desired business outcomes. It empowers users across departments to access and use data efficiently while maintaining integrity and compliance, a crucial aspect of today’s data-driven decision-making environment. Technology-enabled adaptive governance supports composable data and analytics through capabilities that allow flexible governance styles, which can be applied in building, assembling, and reassembling the data-driven business components needed for fast-paced innovation.

By embracing adaptive governance, companies can foster a culture of data democratization without compromising security or compliance. As organizations accelerate and scale their digital business initiatives, ecosystems and platforms, their ability to deliver expected business value and respond to disruption will increasingly rely on effective products that enable adaptive data and analytics governance.

In today's hyper-competitive landscape, the significance of real-time data analysis and filtering cannot be overstated. To meet this need, adopting edge computing should be a priority for businesses. Its ability to deliver instantaneous analytics and filter data close to the source makes it a highly effective solution, especially for industries demanding swift and agile responses.

Imagine the Industry 4.0 revolution, where IoT devices sit on every corner, continuously generating data. According to Gartner, more than 50% of crucial data will soon be managed beyond the confines of traditional data centers and clouds, gravitating toward edge computing. What fuels this swift change? Well, the world presently churns out over 64 zettabytes of data annually, a number projected to soar to 180 zettabytes by 2025. The conventional cloud struggles to cope with this immense influx, and data must be processed at lightning speed to be truly valuable. In today's landscape, constantly shuttling data to and from the cloud feels outdated; the remedy lies in embracing edge computing.

So, businesses must leverage edge analytics to accelerate tasks, save time and resources, and enhance productivity if they want to make the most of the edge computing revolution.
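As a rough picture of what filtering data at the edge can look like, the Python sketch below has a device summarize its own sensor readings and forward only a compact summary plus any out-of-range values, instead of shipping every reading to the cloud. The `summarize_at_edge` function, the temperature readings, and the 0 to 50 range are all made up for this example; real edge platforms offer far richer processing.

```python
def summarize_at_edge(readings, low, high):
    """Return a compact summary and the out-of-range readings worth forwarding."""
    anomalies = [r for r in readings if not (low <= r <= high)]
    summary = {
        "count": len(readings),
        "mean": sum(readings) / len(readings) if readings else None,
        "min": min(readings) if readings else None,
        "max": max(readings) if readings else None,
    }
    return summary, anomalies


# Hypothetical temperature readings collected on the device.
readings = [20.0, 21.0, 95.0, 19.0]
summary, anomalies = summarize_at_edge(readings, low=0.0, high=50.0)

# Only these two small objects would be sent upstream, not every raw reading.
print(summary)
print(anomalies)
```

With four readings the saving is trivial, but the same pattern applied to thousands of readings per second is what keeps bandwidth use and response times manageable.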

Prompt engineering, used here to mean fast-turnaround engineering rather than the crafting of prompts for large language models, emphasizes quick, iterative development and deployment of data pipelines and analytics solutions. It focuses on reducing time-to-insight by streamlining engineering processes and tools. Advanced automation tools powered by AI and machine learning are at its core. These tools automate routine tasks, such as data cleansing, normalization, and feature engineering, allowing data engineers and scientists to focus on higher-value tasks that require human expertise.

By minimizing manual intervention and leveraging automated engineering workflows, companies can significantly reduce the time to turn raw data into actionable insights. Prompt engineering enhances productivity and facilitates agile decision-making in response to rapidly changing business dynamics.

Decision Intelligence (DI) is a data analytics (DA) discipline that analyzes the sequence of cause and effect to curate decision models. These decision models visually represent how actions lead to outcomes by investigating, observing, contextualizing, modeling, and executing data. Decision Intelligence helps make faster and more accurate decisions that result in better outcomes.

By combining data-driven insights with human expertise, decision intelligence empowers organizations to make more informed, timely, and strategic decisions across various domains and sectors. Gartner forecasts that one-third of large corporations will leverage DI in the next two years to augment their decision-making skills.

In short, 2024 heralds a transformative phase in data analytics, where organizations witness a convergence of technologies and methodologies aimed at unlocking the true potential of data. Embracing these trends empowers organizations to navigate the challenges of the digital landscape, drive innovation, and gain a competitive edge in an increasingly data-centric world. As we venture further into this data-driven era, these trends will continue to reshape the future of data analytics in unforeseen yet exciting ways.
