
Editor's note: We are back with another blog as we explore the fascinating realm of data streaming from IoT devices and the game-changing storage solutions of data lakes. Get ready to ride the wave of innovation, where information flows seamlessly and insights abound. Discover how this convergence of technology is shaping the future and unlocking endless possibilities. So, grab your virtual surfboards and dive into the exhilarating world of IoT data!

The Internet of Things (IoT) has revolutionized how devices communicate and gather data. With the proliferation of IoT sensors, organizations have an unprecedented opportunity to harness valuable insights from the vast amounts of data generated.

Most of us benefit every day from the interplay of sensors and connectivity, but what makes it all happen is a series of complex analyses and decisions made at a granular level. Enter data streaming and data lakes, two powerful concepts that enable organizations to capture, process, and analyze IoT data in real time. In this blog, we'll embark on a captivating journey, exploring how IoT data is streamed into a data lake. Buckle up and get ready to dive into the depths of data lakes!

Before diving into the finer details of IoT data streaming and data lakes, let's first recognize the significance of IoT sensors. These small but mighty devices act as the eyes and ears of the digital realm, gathering and transmitting data from various sources. From environmental sensors that measure temperature and humidity to industrial sensors that monitor machine performance, IoT sensors provide a continuous stream of valuable information. Their ubiquity and ability to gather real-time data make them indispensable for numerous industries, including manufacturing, healthcare, agriculture, and smart cities.

According to a report by Gartner, the number of connected IoT devices is projected to reach 25 billion by 2025, highlighting the staggering growth and potential impact of IoT sensors. Additionally, a study conducted by IDC predicts that IoT-generated data will exceed 79.4 zettabytes by 2025, emphasizing the sheer volume of data that organizations need to handle and leverage effectively.

IoT data streaming forms the backbone of the real-time data processing infrastructure. Unlike traditional batch processing, where data is collected and processed in large chunks, data streaming focuses on the continuous flow of data. It involves ingesting, processing, and analyzing data in real time, enabling organizations to respond swiftly to emerging trends or anomalies. Data streaming frameworks, such as Apache Kafka and Apache Flink, act as the conduits that transport data from IoT sensors to subsequent stages in the data pipeline.
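To make the batch-versus-streaming contrast concrete, here is a minimal sketch in plain Python. The readings and the `RunningMean` class are hypothetical and used only for illustration; a real pipeline would receive events from a framework such as Kafka or Flink rather than from a list.

```python
class RunningMean:
    """Keeps an up-to-date average without storing past readings."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0

    def update(self, value):
        # Incremental update: the new mean is available after every event.
        self.count += 1
        self.mean += (value - self.mean) / self.count
        return self.mean


readings = [20.0, 22.0, 24.0]  # hypothetical temperature readings

# Batch style: wait until all data has been collected, then compute once.
batch_mean = sum(readings) / len(readings)

# Streaming style: update the result as each reading arrives.
tracker = RunningMean()
streamed = [tracker.update(value) for value in readings]
```

Both approaches end at the same average, but the streaming version has a usable answer after every reading, which is what allows a system to react while the data is still arriving.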

According to a survey conducted by Databricks, 80% of organizations consider real-time streaming analytics important for their business strategies. This statistic underscores the growing recognition of the value and impact of data streaming in driving actionable insights and informed decision-making.

Source: Analytics Vidhya

One of the key challenges lies in effectively managing this data influx and extracting meaningful information.

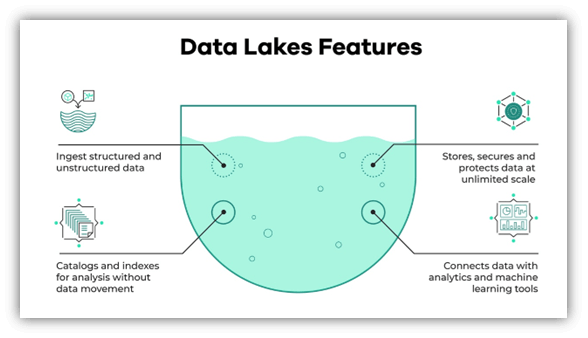

A data lake, in essence, is a centralized repository that stores vast amounts of raw, unprocessed data. It provides a flexible and scalable infrastructure for organizations to store both structured and unstructured data. Unlike traditional data warehouses, which impose rigid schemas and predefined structures, data lakes accommodate diverse data types and allow for data exploration and discovery. By embracing the schema-on-read approach, organizations can defer data transformation until analysis is required, providing agility and flexibility in data processing.
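Schema-on-read can be sketched in a few lines of Python. In this illustration the raw JSON lines, field names, and the `read_temperature` helper are all assumptions made for the example: the lake keeps every record exactly as it arrived, and a schema is applied only when a particular analysis reads the data.

```python
import json

# Raw events stored as-is; devices disagree on field names and types.
RAW_LINES = [
    '{"device_id": "t-1", "temp_c": "21.5", "ts": 1700000000}',
    '{"device_id": "t-2", "temperature_c": 22, "firmware": "2.1", "ts": 1700000005}',
    '{"device_id": "h-1", "humidity_pct": 40, "ts": 1700000010}',
]


def read_temperature(line):
    """Apply a temperature schema at read time; return None if it does not fit."""
    record = json.loads(line)
    value = record.get("temp_c", record.get("temperature_c"))
    if value is None:
        return None  # not a temperature record; irrelevant for this analysis
    return record["device_id"], record["ts"], float(value)


rows = [row for row in map(read_temperature, RAW_LINES) if row is not None]
```

The humidity record stays in the lake untouched. A different analysis could read the same raw lines with a different schema without any change to what was stored.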

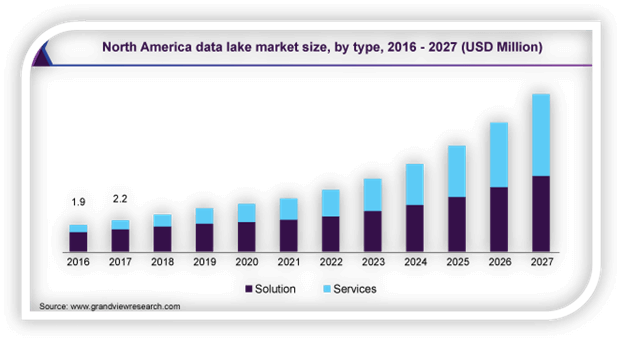

A report by MarketsandMarkets estimates that the global data lake market will reach $20.1 billion by 2026, growing at a compound annual growth rate (CAGR) of 20.6%. This rapid growth highlights the increasing adoption of data lakes as organizations recognize their potential to handle the complexities and scalability requirements of IoT-generated data.

Now, let's connect the dots and explore how data streaming seamlessly integrates with data lakes. The process begins with IoT sensors continuously generating data, which is then ingested by data streaming frameworks like Apache Kafka.

These frameworks act as intermediaries, providing fault-tolerant, scalable, and highly available platforms to handle the streaming data. From there, the data can be processed, transformed, and enriched in real-time using stream processing engines like Apache Flink or Apache Spark. These engines enable operations such as filtering, aggregation, and complex event processing, ensuring that only relevant data is passed downstream.
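The idea of filtering and transforming events so that only relevant data moves downstream can be sketched with plain Python generators. This is not Flink or Spark code; the `sensor_stream` source, the field names, and the 80 °C limit are hypothetical stand-ins for a real stream consumer and real business rules.

```python
def sensor_stream():
    """Stand-in for a stream consumer; yields hypothetical machine readings."""
    yield {"machine": "press-1", "temp_f": 150.8}
    yield {"machine": "press-2", "temp_f": None}  # sensor dropout
    yield {"machine": "press-3", "temp_f": 212.0}
    yield {"machine": "press-1", "temp_f": 185.0}


def drop_invalid(events):
    # Filter: discard events that carry no reading.
    return (e for e in events if e["temp_f"] is not None)


def to_celsius(events):
    # Transform: convert each reading to the unit used downstream.
    for e in events:
        yield {"machine": e["machine"],
               "temp_c": round((e["temp_f"] - 32) * 5 / 9, 1)}


def above(events, limit_c):
    # Filter: pass on only the readings that exceed the limit.
    return (e for e in events if e["temp_c"] > limit_c)


# Each stage pulls one event at a time from the previous stage.
alerts = list(above(to_celsius(drop_invalid(sensor_stream())), limit_c=80.0))
```

Because generators are lazy, each event flows through all three stages before the next one is read, which loosely mirrors how a stream processing engine chains operators.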

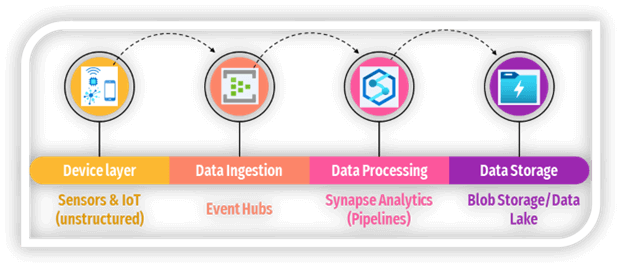

The architecture involves multiple layers as we follow the data journey from IoT sensors to the data lake:

Data engineering teams can set up robust, cloud-based pipelines to stream data from IoT sensors to a centralized data repository, such as a data lake. Leading cloud service providers offer scalable, cost-effective storage solutions such as Amazon S3, Google Cloud Storage, and Microsoft's Azure Blob Storage. Data stored in the repository can be used for automation models, analytics, and transformations using frameworks like Apache Spark. Furthermore, data can be loaded into data warehouses like Snowflake, Amazon Redshift, or Google's BigQuery for convenient SQL-based data manipulation and analysis.

Take advantage of Apache Spark pools to clean, transform, and analyze the streaming data, and combine it with structured data from operational databases or data warehouses.

1. IoT devices layer

These are the physical devices, sensors, or machines that generate data. They could be various IoT devices such as sensors, cameras, actuators, or even industrial machinery.

The IoT devices layer, also known as the perception layer or the edge layer, comprises the sensors and embedded systems that collect data and perform tasks in the physical world. It is responsible for data acquisition, data processing, and device management. Data acquisition involves collecting data from various sources and sending it to the next layer for further analysis. Data processing involves applying filters, aggregations, transformations, or machine learning models to the data at the edge to reduce latency, bandwidth, or cost. Device management involves monitoring, updating, configuring, or securing the devices remotely.
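One simple form of edge processing is a deadband filter: the device transmits a reading only when it differs enough from the last value it sent. The sketch below is an illustration under assumed values; the `deadband` function, the sample readings, and the 0.5-degree threshold are not taken from any specific device SDK.

```python
def deadband(readings, min_change):
    """Yield a reading only if it moved at least min_change since the last one sent."""
    last_sent = None
    for value in readings:
        if last_sent is None or abs(value - last_sent) >= min_change:
            last_sent = value
            yield value  # this reading would be transmitted upstream


# Hypothetical temperature samples taken on the device.
samples = [20.0, 20.1, 20.2, 20.9, 21.0, 19.8]
sent = list(deadband(samples, min_change=0.5))
```

Here only three of the six samples leave the device, which cuts bandwidth at the cost of some resolution. Whether that trade-off is acceptable depends on the use case.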

2. Data Ingestion layer

Azure Event Hubs: Messaging solution for ingesting millions of event messages per second. The captured event data can be processed by multiple consumers in parallel. While Event Hubs natively supports AMQP (Advanced Message Queuing Protocol 1.0), it also provides a binary compatibility layer that allows applications using the Kafka protocol (Kafka 1.0 and above) to process events using Event Hubs with no application changes.

Azure IoT Hub: Provides bi-directional communication between Internet-connected devices, and a scalable message queue that can handle millions of simultaneously connected devices.

Apache Kafka: Open-source message queuing and stream processing application that can scale to handle millions of messages per second from multiple message producers, and route them to multiple consumers. Kafka is available in Azure as an HDInsight cluster type, through Azure Event Hubs for Apache Kafka, and via Confluent Cloud through Microsoft's partnership with Confluent.

3. Data Processing

Azure Stream Analytics: Azure Stream Analytics can run perpetual queries against an unbounded stream of data. These queries consume streams of data from storage or message brokers, filter and aggregate the data based on temporal windows, and write the results to sinks such as storage, databases, or directly to reports in Power BI. Stream Analytics uses a SQL-based query language that supports temporal and geospatial constructs and can be extended using JavaScript.
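Aggregating over temporal windows can be illustrated with a tumbling (fixed, non-overlapping) window average in plain Python. This is a sketch of the concept rather than Stream Analytics query syntax; the event tuples and the `tumbling_window_avg` helper are hypothetical, and it processes a finite list, whereas a real engine emits results as each window closes and has to deal with late or out-of-order events.

```python
from collections import defaultdict


def tumbling_window_avg(events, window_s):
    """Average value per (window start, device) over fixed-size windows.

    events: iterable of (timestamp_seconds, device, value) tuples.
    """
    acc = defaultdict(lambda: [0.0, 0])  # key -> [running total, count]
    for ts, device, value in events:
        window_start = ts - ts % window_s  # align to the window boundary
        bucket = acc[(window_start, device)]
        bucket[0] += value
        bucket[1] += 1
    return {key: total / count for key, (total, count) in sorted(acc.items())}


events = [
    (0, "a", 10.0), (30, "a", 20.0), (59, "b", 5.0),
    (60, "a", 30.0), (61, "b", 7.0), (119, "b", 9.0),
]
averages = tumbling_window_avg(events, window_s=60)
```

With a 60-second window, the events at 0, 30, and 59 seconds fall into the first window and the rest into the second, so each device gets one average per minute.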

Spark Streaming: Apache Spark is an open-source distributed platform for general data processing. Spark provides the Spark Streaming API, in which you can write code in any supported Spark language, including Java, Scala, and Python. Spark 2.0 introduced the Spark Structured Streaming API, which provides a simpler and more consistent programming model. Spark 2.0 is available in an Azure HDInsight cluster.

4. Data Storage

Azure Storage Blob Containers or Azure Data Lake Store:

Incoming real-time data is usually captured in a message broker, but in some scenarios, it can make sense to monitor a folder for new files and process them as they are created or updated. Additionally, many real-time processing solutions combine streaming data with static reference data, which can be stored in a file store. Finally, file storage may be used as an output destination for captured real-time data for archiving, or for further batch processing in a lambda architecture.
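Combining streaming data with static reference data usually amounts to a lookup per event. In the sketch below, the `REFERENCE` table stands in for a file loaded from the file store, and the sensor IDs, production lines, and temperature limits are invented for illustration.

```python
# Static reference data, e.g. loaded once from a file in the store (hypothetical).
REFERENCE = {
    "s-1": {"line": "A", "max_temp_c": 80.0},
    "s-2": {"line": "B", "max_temp_c": 60.0},
}


def enrich_with_reference(events, reference):
    """Attach reference attributes to each streaming event."""
    for event in events:
        meta = reference.get(event["sensor_id"])
        if meta is None:
            # Unknown sensor: keep the event but mark the gaps explicitly.
            yield {**event, "line": None, "over_limit": None}
        else:
            yield {**event,
                   "line": meta["line"],
                   "over_limit": event["temp_c"] > meta["max_temp_c"]}


stream = [
    {"sensor_id": "s-1", "temp_c": 75.0},
    {"sensor_id": "s-2", "temp_c": 75.0},
    {"sensor_id": "s-9", "temp_c": 40.0},
]
enriched = list(enrich_with_reference(stream, REFERENCE))
```

The same 75 °C reading is within limits on line A but over the limit on line B, which is the kind of context a raw sensor value cannot provide on its own.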


1. Data preprocessing and cleansing: Data analytics techniques can be used to preprocess and cleanse the streaming data before it is stored in the data lake. This involves handling missing values, removing duplicates, normalizing data formats, and addressing data quality issues. By ensuring data cleanliness and consistency, analytics can improve the accuracy and reliability of subsequent analyses.

2. Real-time data aggregation and summarization: Data analytics allows for real-time aggregation and summarization of streaming data as it enters the data lake. This involves calculating metrics such as averages, counts, sums, or time-based aggregations. Aggregated data provides a condensed view of the streaming data, making it easier to analyze and derive insights from large volumes of information.

3. Complex event processing: Data analytics techniques, including complex event processing (CEP), can be applied to IoT streaming data in real-time. CEP involves identifying and analyzing complex patterns, sequences, and relationships within the data stream. This enables the detection of critical events, anomalies, or specific conditions that require immediate action.

4. Anomaly detection: By applying predictive analytics models to the streaming data, patterns and anomalies can be identified. Predictive models can be trained on historical data to make predictions about future events or behavior. Anomaly detection algorithms can identify deviations from normal patterns in real time, enabling proactive measures to be taken when unusual events occur.

5. Data correlation and contextual analysis: Data analytics helps in correlating IoT streaming data with other relevant data sources. This contextual analysis provides a broader understanding of the data, such as correlating sensor data with weather conditions or customer behavior. By analyzing data in context, businesses can uncover deeper insights and make more accurate predictions.

6. Machine learning and AI algorithms: Data analytics leverages machine learning and AI algorithms to extract insights from IoT streaming data. These algorithms can identify patterns, make predictions, classify data, and perform other advanced analyses. By continuously learning from the streaming data, machine learning models can improve their accuracy and provide real-time insights.

7. Visualization and interactive exploration: Data analytics tools offer visualization capabilities that allow stakeholders to explore and interact with the streaming data. Visualizations, such as charts, graphs, and dashboards, provide a visual representation of the data, making it easier to identify trends, outliers, and patterns. Interactive exploration enables users to drill down into the data, filter information, and gain a deeper understanding of the IoT streaming data.
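Two of the techniques above, cleansing and anomaly detection, can be sketched together in plain Python. This is one simple approach among many: duplicates and missing values are dropped, then a rolling z-score flags readings far from the recent baseline. The event fields, window size, and threshold of 3 are assumptions for the example, and the detector tracks a single series rather than one baseline per device.

```python
from collections import deque
from statistics import mean, pstdev


def cleanse(events):
    """Drop events with missing values and exact (device, ts) duplicates."""
    seen = set()
    for e in events:
        key = (e["device"], e["ts"])
        if e.get("value") is None or key in seen:
            continue
        seen.add(key)
        yield e


def flag_anomalies(events, window=5, z_limit=3.0):
    """Yield events whose value deviates strongly from the recent baseline."""
    history = deque(maxlen=window)
    for e in events:
        if len(history) == window:
            mu, sigma = mean(history), pstdev(history)
            if sigma > 0 and abs(e["value"] - mu) / sigma > z_limit:
                yield e
                continue  # keep the outlier out of the baseline
        history.append(e["value"])


raw = [
    {"device": "d1", "ts": 0, "value": 10.0},
    {"device": "d1", "ts": 1, "value": 10.2},
    {"device": "d1", "ts": 1, "value": 10.2},  # duplicate delivery
    {"device": "d1", "ts": 2, "value": None},  # missing reading
    {"device": "d1", "ts": 3, "value": 9.9},
    {"device": "d1", "ts": 4, "value": 10.1},
    {"device": "d1", "ts": 5, "value": 10.0},
    {"device": "d1", "ts": 6, "value": 25.0},  # spike
    {"device": "d1", "ts": 7, "value": 10.1},
]
clean = list(cleanse(raw))
anomalies = list(flag_anomalies(clean))
```

In production, a trained model or a library-backed detector would typically replace the z-score rule, but the flow stays the same: clean first, then score each event against what came before it.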

The seamless integration of data streaming and data lakes has revolutionized how organizations unlock the value of IoT sensor data. By establishing a robust data streaming pipeline, organizations can ingest, process, and analyze data in real time, enabling timely decision-making and actionable insights.

Data lakes, on the other hand, provide a scalable and flexible storage solution that accommodates the deluge of IoT data, allowing organizations to explore and discover hidden patterns. The synergy between data streaming and data lakes sets the stage for transformative applications across industries, from predictive maintenance in manufacturing to precision agriculture in farming.

As the IoT era unfolds, the capacity to collect and analyze data produced by IoT sensors becomes a strategic necessity for organizations seeking a competitive edge. According to a McKinsey report, organizations that use IoT data to guide their decision-making processes report a 10-15% improvement in operating margin. By streaming the data captured from IoT devices into a data lake, organizations can confidently navigate the data-driven landscape. So, get ready to surf the data tsunami and unleash the IoT ecosystem's latent potential!
